Chatbot Winter


It proved a lot harder than Facebook thought to make a success of their human-assisted AI (HAAI) M personal assistant. TechCrunch are reporting M is now being shut down in what has to constitute a real blow for the scalability of HAAI propositions:

M’s secret sauce wasn’t artificial intelligence — it was good old humans. Behind the scenes, M relied on humans to answer the most complicated queries. For instance, you could book a table at a restaurant, order flowers, organize your next vacation and more using M. Facebook leveraged its acquisition of Wit.ai to develop M.

M was first mentioned on this blog as a service to watch out for three years ago. As recently as 15 months ago it was being talked up as the vanguard of a new ecosystem by the likes of the Economist. Wired, however, point out the real issue, namely that it’s not possible to remove humans from the HAAI loop, and scaling human agency isn’t really what this was supposed to be about:

One source familiar with the program estimates M never surpassed 30 percent automation. Last spring, M’s leaders admitted the problems they were trying to solve were more difficult than they’d initially realized.

It was easy for M’s leaders to win internal support and resources for the project in 2015, when chatbots felt novel and full of possibility. But as it became clear that M would always require a sizable workforce of expensive humans, the idea of expanding the service to a broader audience became less viable.

When M could complete tasks, users asked for progressively harder tasks. A fully automated M would have to do things far beyond the capabilities of existing machine learning technology. Today’s best algorithms are a long way from being able to really understand all the nuances of natural language.

The spectacular success of Alexa has been a key factor in undermining chatbots. Wired published a good article on the extent to which Amazon’s AVS (Alexa Voice Services) team have thought through how to scale Alexa with 3P (third-party) manufacturers. Amazon’s discipline in working with OEMs and growing the Alexa skills ecosystem contrasts starkly with the confusing efforts made by Facebook to integrate M:

if you want to Alexa-enable your product, you just go shopping. Amazon offers seven different development kits for a few hundred dollars apiece, each with a specific product type in mind. The first one Amazon built had two mics in a line; a new one has seven laid out in a ring exactly like the Echo. “It’s the same mic array, the same technology in terms of the algorithms and wake word engine,” says Al Woo, a product manager on the AVS team, holding up the Echo-like kit. “If a company wants to develop a product that matches as closely as possible to the performance and function of an Echo device, this is how.” The gizmo in his hand has a fully exposed motherboard and wires dangling everywhere, but Alexa’s already up and running. With it, developers can have a demo-ready Alexa integration in just half an hour.

The dream of unsupervised AI chatbots, perhaps now mediated through hardware like the Echo, will, one suspects, endure, driven by Hollywood as well as the Valley.

Artificial Intelligence

Google’s latest state of the art in natural text-to-speech synthesis, Tacotron 2, which leverages RNNs, is astonishing. Relatedly, this arXiv paper outlines the major advances in RNNs over the last ten or so years and some of the key areas of application where they are being used today, including speech recognition, speech synthesis, machine vision, and video description generation.

Even so, it’s not enough to persuade OSS voice assistant outfit mycroft.ai to stick with Google’s voice services. CTO Steve Penrod outlines why they’ve switched to Mozilla’s DeepSpeech, an open source engine based on Baidu’s Deep Speech research, in a blog post that is fascinating on multiple levels:

We’ve assisted with Project Common Voice and are creating a new mechanism allowing Mycroft users to participate in building the Open Dataset to provide more real-world data for use in training to improve the system.

At the beginning of the summer the word-error-rate for DeepSpeech was at around 15%. By the fall it was at 10% and it is continuing to improve as more training data is digested. This is now in the accuracy realm needed for a voice assistant.
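For readers unfamiliar with the metric quoted above, word error rate is conventionally the word-level edit distance (substitutions, deletions and insertions) between the recogniser’s output and a reference transcript, divided by the number of words in the reference. The following is a minimal sketch of that textbook definition; the function name and the lowercase, whitespace-based tokenisation are assumptions for illustration and not how Mycroft or DeepSpeech necessarily score their models.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length.

    Illustrative helper only: tokenisation is a simple lowercase split
    on whitespace, which is an assumption rather than a standard.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] is the edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # turning i reference words into nothing costs i deletions
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr

    return prev[-1] / len(ref)
```

On this definition, one wrong word in a ten-word utterance gives a word error rate of 10%, the level the post describes as being in the realm needed for a voice assistant.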

DeepMind keep going from strength to strength in applying deep learning to health scenarios. Scientific American survey the prospects for retinal medicine:

Eyes are said to be the window to the soul—but researchers at Google see them as indicators of a person’s health. The technology giant is using deep learning to predict a person’s blood pressure, age and smoking status by analysing a photograph of their retina. Google’s computers glean clues from the arrangement of blood vessels—and a preliminary study suggests that the machines can use this information to predict whether someone is at risk of an impending heart attack.

DeepMind’s amazing 2017 in review includes NHS monitoring app Streams as a highlight.

And this post outlines 30 amazing machine learning projects of the last year, including several deep photo style transfer propositions. There’s a link to a great-looking Udemy course on TensorFlow. One could spend years learning everything going on now only to find it out of date by the time one got there. One sympathises with this blogger, who expresses guilt over data science and machine learning imposter syndrome:

After reading a bunch of job postings, I figured out that all it will take to become a real data scientist is five PhD’s and 87 years of job experience.

Also worth a read is this look at applied machine learning at Facebook from the perspective of its infrastructure requirements. They use Caffe2 for supporting production machine learning models and PyTorch for development.

Bloomberg consider the prospects for robot farming, which could free humanity from its legacy of carrying buckets of water to fields, something that has been commonplace for most humans who have lived over the last ten thousand years.

Software

A write-up on GitOps and high-velocity continuous integration and continuous deployment at Weave using Kubernetes and Docker. The key to it is using Git to store operational state and then having automated build and release pipelines:

What exactly is GitOps? By using Git as our source of truth, we can operate almost everything. For example, version control, history, peer review, and rollback happen through Git without needing to poke around with tools like kubectl.

- Our provisioning of AWS resources and deployment of k8s is declarative

- Our entire system state is under version control and described in a single Git repository

- Operational changes are made by pull request (plus build & release pipelines)

- Diff tools detect any divergence and notify us via Slack alerts; and sync tools enable convergence

- Rollback and audit logs are also provided via Git
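The "diff tools detect any divergence ... sync tools enable convergence" idea in the list above can be illustrated with a small sketch. It assumes, purely for illustration, that both the desired state held in Git and the live cluster state can be reduced to a mapping from resource name to version; the function names and data shapes are hypothetical and are not Weave’s actual tooling.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical representation: resource name -> deployed version tag.
State = Dict[str, str]
Drift = Tuple[str, Optional[str], Optional[str]]  # (name, desired, live)


def detect_drift(desired: State, live: State) -> List[Drift]:
    """List every resource whose live value differs from what Git declares."""
    drift: List[Drift] = []
    for name in sorted(set(desired) | set(live)):
        want = desired.get(name)  # None: resource is not declared in Git
        have = live.get(name)     # None: resource is missing from the cluster
        if want != have:
            drift.append((name, want, have))
    return drift


def converge(desired: State, live: State) -> State:
    """Return the live state after applying the changes needed to match Git."""
    synced = dict(live)
    for name, want, _have in detect_drift(desired, live):
        if want is None:
            del synced[name]      # remove resources Git does not declare
        else:
            synced[name] = want   # create or update to the declared version
    return synced
```

In a real pipeline the drift list would feed an alert (the post mentions Slack) and the convergence step would call the cluster API rather than edit a dictionary. The point of the sketch is only that Git’s declared state is always the side that wins.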

Here’s how to deploy a new Python package to PyPI.

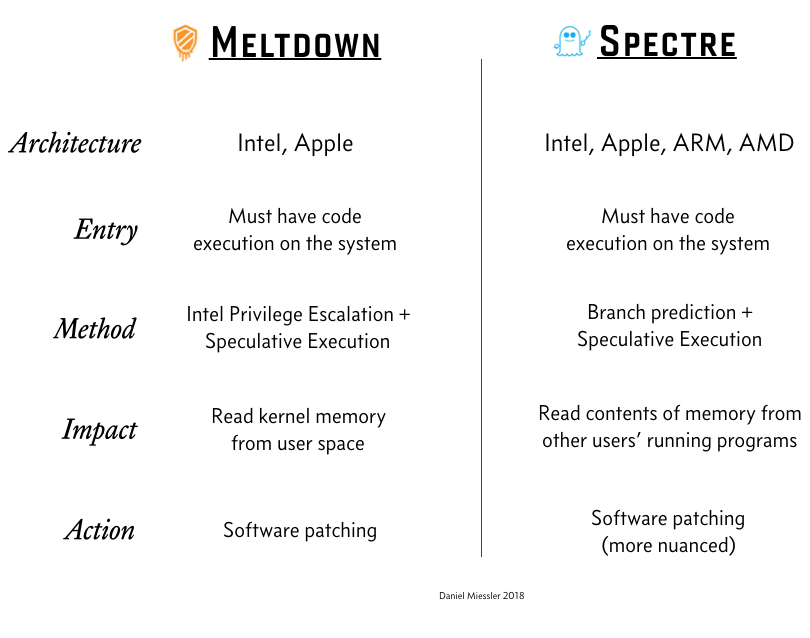

This InfoQ post on Spectre and Meltdown is a useful addition to the reams that have been written on the topic.

CES

The annual bloatfest of tech that is CES took place this week in Las Vegas. Reactions were mixed, with much of the media focus on voice assistants and screen technology. Google were everywhere pushing Google Home. Amazon with Alexa were also a major presence, though more through their OEM partners than directly. Wired’s gallery of the best new gadgets on show includes The Wall from Samsung, a stunning move on from The Frame in the burgeoning arena of tech lifestyle furniture.

Samsung and LG extensively purloined Vincent Van Gogh’s back catalogue to showcase their display brilliance, and voice-controlled smart TVs were the order of the day. In LG’s case it extended to some remarkable rollable screen technology. However, neither Bixby nor Alexa, which were being demo’d in the main hall when it happened, could rescue CES from a huge power outage, part-caused, one assumes, by all those screens.

Meanwhile, further out east of Las Vegas, the Hoover Dam stands as a reminder of an era 80 years ago when a huge American civic construction project captured the imagination of the world and served as a beacon of inspiration rather than something that divided people:

The art deco exterior is almost socialist in spirit. There is real American Beauty here:

Over in Fremont, an absolutely colossal curved screen displayed entertainment on a grand scale on the hour, complete with zipwire and crowds of local Las Vegans enjoying themselves. Spectacular.

The Silicon Valley Backlash

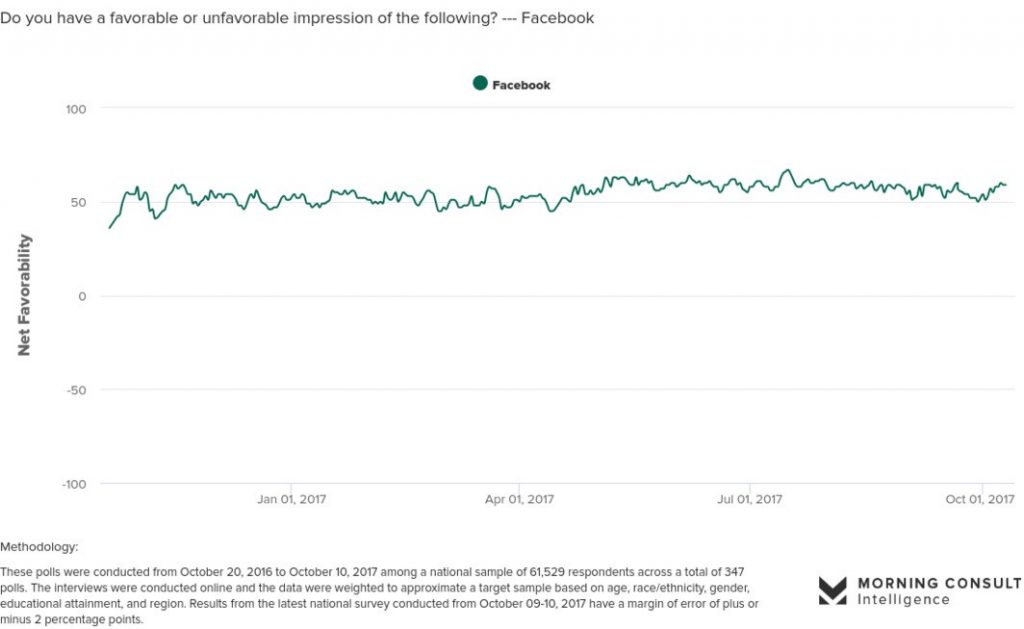

Wired on why you want to forget what you’ve read about the tech backlash. The GAFA quartet are still riding super high in consumer polls. It’s not sensible, however much you might want to, to bet against Facebook:

Still, the steady drip of tech disillusion posts keeps coming. Here’s one from the Guardian on digital detox at the start of the year. Not sure about the equivalence of these ground rules though:

- Delete all social media apps from your phone; check these only from a desktop computer.

- Turn all banner-style/pop-up/sound notifications off all other apps (keep the badge-type notifications where you have to visually check the app).

- Leave your phone in your pocket or keep it out of sight for meetings/get-togethers/conversations/meals involving other people.

- Keep your phone out of sight during your commute.

- Don’t take your phone with you into the bathroom or toilet.

And there are other darker forces at work in the Valley. Bloomberg are reporting that Chinese workers are abandoning the US for riches back home. And Quartz report on Silicon Valley sexual harassers asking for a second chance:

People who permanently derail women’s careers, and inflict psychological damage on victims, should not expect to get a second chance, let alone be praised for their mea culpas.