Post-Work


“Either automation or the environment, or both, will force the way society thinks about work to change”

The Refusal of Work by David Frayne

This Guardian long read on work expands on some themes covered here before. Its main assertion is that, with the twin threats of technological unemployment and the sixth extinction looming monstrously and overshadowing everything else, it is becoming plain that:

work is not working, for ever more people, in ever more ways. We resist acknowledging these as more than isolated problems – such is work’s centrality to our belief systems – but the evidence of its failures is all around us.

Universal Basic Income (UBI) is seen as a key part of the answer by many radical thinkers, but it is still too unacceptable for many in the mainstream to entertain. One suspects there’s a long way down to go before the mainstream does so: there are too many vested interests that want people to work harder still, and in more quantified ways than even Orwell could have imagined. Evidence from the recent past suggests that a reduced focus on work can have many positive impacts on the arts and creativity. Even the chaotic early 1970s had an upside in that respect as non-work life expanded and “audiences trebled for late-night BBC radio DJs such as John Peel”. Who knows what magic was created in the liberated candlelit evenings of the three-day week.

Millennials in work today are entitled to be skeptical about the psychological contract they have. The idea that if you work hard and save up, you can buy yourself a future like your parents did is simply not viable any more:

For many people, not just the very wealthy, work has become less important financially than inheriting money or owning a home.

Quartz’s work blog points out that the likeliest way to get Millennials to work longer hours is not to pay them more but to introduce opportunities to engage their interest in social causes and, implicitly, to align work with personal passions.

Interestingly, Mustafa Suleyman, a co-founder of DeepMind, itself one of the outriders of technological unemployment, appears to have done just that, judging by this recent profile:

it was clear to me that we needed new institutions, creativity and knowledge in order to navigate the growing complexity of our social systems. Reapplying existing human knowledge was not going to be enough. Starting a new kind of organisation with the single purpose of building AI and using it to solve the world’s toughest problems was our best shot at having a transformative, large scale impact on society’s most pressing challenges.

Machine Learning and Artificial Intelligence

Gary Marcus takes his deep learning skepticism, highlighted a couple of weeks ago, one stage further, claiming the discipline “may never create a general purpose AI”. He makes the important point that the word “deep” has unfortunate overtones that don’t reflect what’s truly going on:

Marcus points out that the ‘deep’ in ‘deep learning’ refers to the architectural nature of the system – meaning the number of layers in today’s neural networks – rather than the conceptual notion of depth. “The representations acquired by such networks don’t, for example, naturally apply to abstract concepts like ‘justice’, ‘democracy’ or ‘meddling’”, he writes, arguing that there is a superficiality to some patterns extracted by the technique.

The reality, in his view, is much more quotidian. It echoes last week’s commentary on the demise of M, where it is still very much the Wizard of Oz behind the curtain:

The disappointment around M largely derives from the fact that it attempted a new approach: instead of depending solely on AI, the service introduced a human layer – the AI was supervised by human beings. If the machine received a question or task it was incapable of answering or responding to, a human would step in. In that way, the human being would act to further train the algorithm. … we were promised general purpose artificial intelligence, and we got a home assistant that looks at the contents of our fridge and tells us to make a sandwich. What a time to be alive.

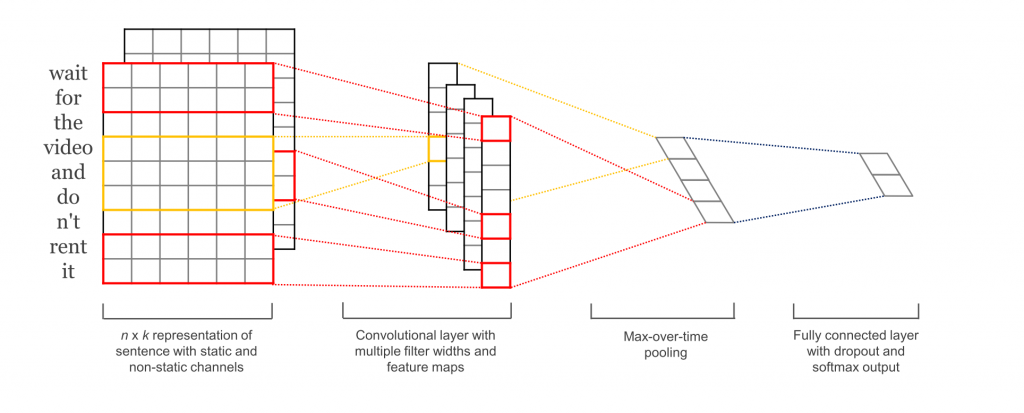

An O’Reilly post outlines how convolutional neural nets (CNNs) can be used to classify text, something that has more recently become a standard use case for recurrent neural nets (RNNs) built on long short-term memory (LSTM) units.
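As a rough illustration of the general idea (not the code from the O’Reilly post), the sketch below slides small filters over a sequence of word vectors and keeps the strongest response of each filter, the usual "max-over-time pooling" step in text CNNs. The word vectors, the filter weights and the names `EMBEDDINGS`, `KERNELS`, `conv1d` and `featurize` are all made up for the example. A real model would learn these values and feed the resulting features into a classifier.

```python
# Toy 1-D convolution over word vectors with max-over-time pooling.
# Every number below is an illustrative, hand-picked value, not a trained weight.

EMBEDDINGS = {              # hypothetical 2-dimensional word vectors
    "great": [1.0, 0.0],
    "awful": [-1.0, 0.0],
    "film": [0.0, 1.0],
    "a": [0.0, 0.0],
}

KERNELS = [                 # each filter spans two consecutive words
    [[1.0, 0.0], [0.0, 1.0]],    # responds to "positive word, then 'film'"
    [[-1.0, 0.0], [0.0, 1.0]],   # responds to "negative word, then 'film'"
]


def conv1d(sequence, kernel):
    """Slide `kernel` over `sequence`, returning one ReLU activation per window."""
    width = len(kernel)
    outputs = []
    for start in range(len(sequence) - width + 1):
        window = sequence[start:start + width]
        total = sum(w * x
                    for weights, vector in zip(kernel, window)
                    for w, x in zip(weights, vector))
        outputs.append(max(0.0, total))  # ReLU non-linearity
    return outputs


def featurize(tokens, kernels=KERNELS):
    """Return one pooled feature per filter (0.0 if the text is too short)."""
    vectors = [EMBEDDINGS.get(token, [0.0, 0.0]) for token in tokens]
    return [max(conv1d(vectors, kernel), default=0.0) for kernel in kernels]


positive = featurize("a great film".split())
negative = featurize("a awful film".split())
```

Here the first filter fires on "great film" and the second on "awful film", so the two pooled feature vectors differ in exactly the position a downstream classifier could use to tell the sentences apart.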

Amazon

The Information suggests that Amazon intends AWS to offer a no-code proposition “to enable business users who aren’t engineers to build customized apps solving specific problems, merely by dragging and dropping app components into a graphical interface”:

AWS is hoping a low-code platform would attract non-engineers within organizations that already use AWS, according to two people who the company has briefed on the cloud unit’s plans. That’s one reason why AWS tapped Adam Bosworth, an industry veteran who joined the cloud unit in August 2016, to oversee development of the low-code platform, said the two people. Mr. Bosworth previously worked at Salesforce, Google and Microsoft and has deep experience in developing productivity applications and development platforms.

The NYT profiles the Amazon Go store, which has just opened in Seattle. Seen by many as the harbinger of technological unemployment for retail, it does indeed feel very odd and disconcerting the first time you use it without any human interaction:

At Amazon Go, checking out feels like — there’s no other way to put it — shoplifting. It is only a few minutes after walking out of the store, when Amazon sends an electronic receipt for purchases, that the feeling goes away.

However, that isn’t the full story. Turns out there are some things that still need humans in the loop:

Because there are no cashiers, an employee sits in the wine and beer section of the store, checking I.D.s before customers can take alcohol off the shelves.

Security

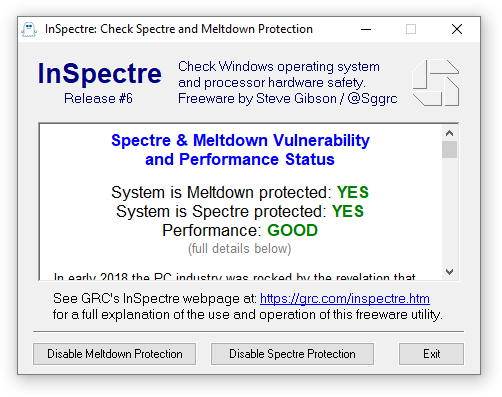

Gibson Research has released InSpectre, a tool in the ShieldsUP! vein for testing whether your local Windows machine is protected against Meltdown and Spectre.

The UI for the missile alert system in Hawaii that created chaos and panic with a false alert is as bad as you might expect. It highlights one of the most worrying aspects of computer security: what if nuclear annihilation is the result of fat finger syndrome?

This is the screen that set off the ballistic missile alert on Saturday. The operator clicked the PACOM (CDW) State Only link. The drill link is the one that was supposed to be clicked. #Hawaii pic.twitter.com/lDVnqUmyHa

— Honolulu Civil Beat (@CivilBeat) January 16, 2018

And as if that wasn’t worry enough, it will soon become all but impossible to tell a real voice from a fake one, ushering in an entirely new and desperate security arms race between technology companies and those who would exploit the technology:

In 2018, fears of fake news will pale in comparison to new technology that can fake the human voice. This could create security nightmares. Worse still, it could strip away from each of us a part of our uniqueness.

Blockchain

Steven Johnson’s NYT post on life beyond the Bitcoin bubble is a fascinating read, particularly in the way it links fixing the Internet’s broken architecture with making identity a core part of its protocols using blockchain principles:

If there’s one thing we’ve learned from the recent history of the internet, it’s that seemingly esoteric decisions about software architecture can unleash profound global forces once the technology moves into wider circulation. … The real promise of these new [blockchain] technologies, many of their evangelists believe, lies not in displacing our currencies but in replacing much of what we now think of as the internet, while at the same time returning the online world to a more decentralized and egalitarian system. If you believe the evangelists, the blockchain is the future. But it is also a way of getting back to the internet’s roots.

Ultimately, it’s going to take new code, not regulation, to get us out of here:

If you think the internet is not working in its current incarnation, you can’t change the system through think-pieces and F.C.C. regulations alone. You need new code.

Software

This article, written from a tax accountancy perspective, explains why treating software as a capital expenditure can offset costs and “help make a company look more profitable than it really is”. However, the author goes on to emphasise that on every other level “capitalising software development makes no sense on a practical or intellectual level” because:

if you capitalise your software expenditure, you can’t claim R&D enhancement for it – so you lose out on two fronts: you pay more tax, and you don’t get tax credits.

Unimatrix.py is a Python 3 command-line script that uses ncurses to recreate a variety of displays inspired by The Matrix in your terminal window. It just worked for me on my Mac.

A Quoran asks what the best piece of source code to read is. One of the better answers suggests starting with Peter Norvig’s famous Python spelling corrector, which remains a marvel of list-comprehension-driven poetic elegance:

import re
from collections import Counter

def words(text): return re.findall(r'\w+', text.lower())

WORDS = Counter(words(open('big.txt').read()))

def P(word, N=sum(WORDS.values())):
    "Probability of `word`."
    return WORDS[word] / N

def correction(word):
    "Most probable spelling correction for word."
    return max(candidates(word), key=P)

def candidates(word):
    "Generate possible spelling corrections for word."
    return (known([word]) or known(edits1(word)) or known(edits2(word)) or [word])

def known(words):
    "The subset of `words` that appear in the dictionary of WORDS."
    return set(w for w in words if w in WORDS)

def edits1(word):
    "All edits that are one edit away from `word`."
    letters    = 'abcdefghijklmnopqrstuvwxyz'
    splits     = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes    = [L + R[1:] for L, R in splits if R]
    transposes = [L + R[1] + R[0] + R[2:] for L, R in splits if len(R)>1]
    replaces   = [L + c + R[1:] for L, R in splits if R for c in letters]
    inserts    = [L + c + R for L, R in splits for c in letters]
    return set(deletes + transposes + replaces + inserts)

def edits2(word):
    "All edits that are two edits away from `word`."
    return (e2 for e1 in edits1(word) for e2 in edits1(e1))

correction('speling')
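The listing above needs a `big.txt` corpus on disk before it will run. For anyone who just wants to watch the candidate generation at work, here is a minimal self-contained sketch of the same approach that swaps the corpus for a few inline sentences. `SAMPLE_TEXT` and `TOTAL` are stand-ins invented for this example, and with so little text the word probabilities are only good enough for a demonstration.

```python
import re
from collections import Counter

# Hypothetical stand-in for big.txt: just enough text to exercise the code.
SAMPLE_TEXT = """
Spelling is hard. Correct spelling matters when reading source code.
The corrector reads a large text and counts how often each word appears.
"""

def words(text):
    return re.findall(r'\w+', text.lower())

WORDS = Counter(words(SAMPLE_TEXT))
TOTAL = sum(WORDS.values())

def P(word):
    "Relative frequency of `word` in the sample text."
    return WORDS[word] / TOTAL

def edits1(word):
    "All strings one delete, transpose, replace or insert away from `word`."
    letters = 'abcdefghijklmnopqrstuvwxyz'
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [L + R[1:] for L, R in splits if R]
    transposes = [L + R[1] + R[0] + R[2:] for L, R in splits if len(R) > 1]
    replaces = [L + c + R[1:] for L, R in splits if R for c in letters]
    inserts = [L + c + R for L, R in splits for c in letters]
    return set(deletes + transposes + replaces + inserts)

def known(candidates):
    "The subset of `candidates` that appear in the sample text."
    return {w for w in candidates if w in WORDS}

def correction(word):
    "Prefer the word itself, then known words one edit away, then two edits away."
    candidates = (known([word])
                  or known(edits1(word))
                  or known(e2 for e1 in edits1(word) for e2 in edits1(e1))
                  or [word])
    return max(candidates, key=P)

print(correction('speling'))
print(correction('teh'))
```

With this sample text, the only known word within one edit of 'speling' is 'spelling' (insert an 'l'), and the only one within one edit of 'teh' is 'the' (swap two letters), so those are the corrections the sketch prints.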