
Politics, Truth, Lies and Statistics


“If you tell a lie big enough and keep repeating it, people will eventually come to believe it. The lie can be maintained only for such time as the State can shield the people from the political, economic and/or military consequences of the lie. It thus becomes vitally important for the State to use all of its powers to repress dissent, for the truth is the mortal enemy of the lie, and thus by extension, the truth is the greatest enemy of the State.”

Attributed to Joseph Goebbels

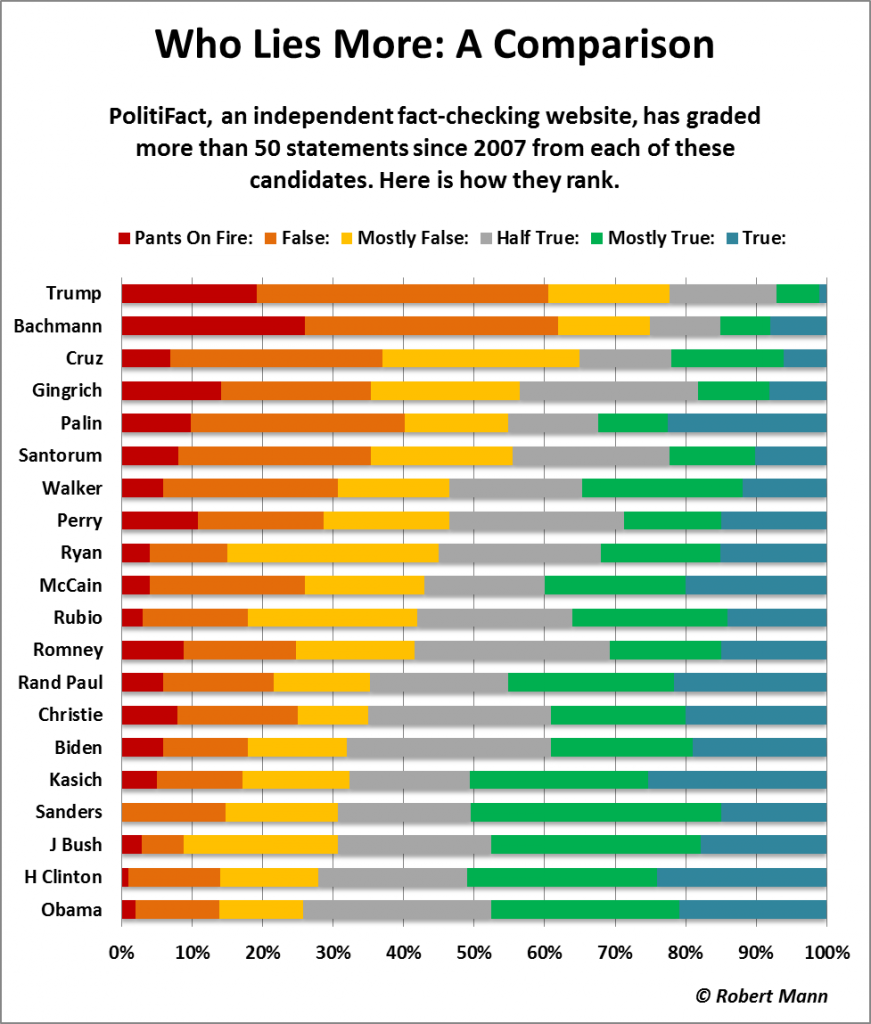

Donald Trump seems to have taken the lie to new heights (or depths) if this analysis by PolitiFact is to be believed. It suggests his campaign approach is far more fiction than fact, with substantially less than 10% of his assessed statements rated “true” or “mostly true”. That stands in stark contrast to the scores for Clinton and Obama at the other end of the spectrum. Having said that, the data suggests every politician is guilty of at least some degree of economy with the truth.

In last week’s blog I suggested that, irrespective of evidence of mass-scale dissembling, what many commentators perceive as huge campaign mistakes, and an obvious dark narcissism and inability to connect with anyone other than himself, it remains all too plausible that Donald Trump will win the US election. This week, Michael Moore concurred, providing a similarly gloomy assessment of proceedings:

don’t fool yourself — no amount of compelling Hillary TV ads, or outfacting him in the debates or Libertarians siphoning votes away from Trump is going to stop his mojo.

He gives five reasons why, ranging from dislike of Clinton, anti-PC sentiment and ‘Rust Belt Brexit’ maths to obstacles placed in the way of ‘natural’ Clinton supporters to deter or prevent them from voting. Whether or not the candidate tells the truth doesn’t seem to make his list. What does is perhaps the most chilling reason of all, namely the morbid fascination of millions of voters who, contemplating a binary choice in the privacy of a small booth in a little over three months’ time, may be drawn to stick a finger in the fan blade of history just to see what happens:

do not discount the electorate’s ability to be mischievous or underestimate how many millions fancy themselves as closet anarchists once they draw the curtain and are all alone in the voting booth. It’s one of the few places left in society where there are no security cameras, no listening devices, no spouses, no kids, no boss, no cops, there’s not even a friggin’ time limit. You can take as long as you need in there and no one can make you do anything. You can push the button and vote a straight party line, or you can write in Mickey Mouse and Donald Duck. There are no rules. And because of that, and the anger that so many have toward a broken political system, millions are going to vote for Trump not because they agree with him, not because they like his bigotry or ego, but just because they can.

The spectre of a globally rising tide of authoritarianism will be there with them, acknowledged or not, when they make their choice. Trump is clearly enamoured of Putin’s no-nonsense approach in this respect. The Russian leader has been pushing back boundaries for years, in some cases in areas Trump seems outwardly comfortable with, at least for now, and he is adept at practising the dark, cynical arts of Maskirovka, or military deception. In some senses the outcome of the election is best viewed as a milestone rather than the end of a journey. Trump’s candidacy alone is arguably the logical endpoint of a 30-year war against government that the GOP has been fighting. Irrespective of whether he wins or loses, he and his supporters aren’t going to go away: they will be invigorated and emboldened by victory or defeat alike. A Trump victory would be the crowning achievement of post-factual populism, the triumph of a version of Maskirovka manufactured in the West. It would represent a genuine crisis for supporters of a progressive, data-led rationalism who have largely had it their way since the Second World War. However, a Clinton presidency arguably won’t take the US to sunlit uplands either. If she wins, she can expect a brutal rearguard insurrection by obstruction-minded opponents who will maintain that she should be indicted.

As highlighted in a recent post, history has many examples of cyclic reversals of fortune for a prevailing hegemony, a ‘creative destruction’ of the status quo. According to this stark analysis by Tobias Stone, we may be living through a Fourth Turning right now, and it may well end badly for many. Trump famously isn’t much of a reader, so he may not be aware of the context:

The people who see that open societies, being nice to other people, not being racist, not fighting wars, is a better way to live, they generally end up losing these fights. They don’t fight dirty. They are terrible at appealing to the populace. They are less violent, so end up in prisons, camps, and graves. … Trump and Putin supporters don’t read the Guardian, so writing there is just reassuring our friends. We need to find a way to bridge from our closed groups to other closed groups, try to cross the ever widening social divides.

Turning to tech, The Information published a handy comparison of where the two candidates stand on tech issues. As if to underline some of the points above about the changing direction of the political wind, it revealed some interesting similarities between them, for instance on the trade deal Obama has negotiated with China:

Between privacy, labor laws, trade, immigration and net neutrality, there’s a lot at stake for tech in this election. The candidates haven’t given extensive details, but definite differences have emerged, with Hillary Clinton explicitly taking up many of tech’s causes.

Brexit

The Tobias Stone analysis above is primarily concerned with Brexit. It provides a route map for how a seemingly local political decision could constitute the “shot heard around the world” that triggers a World War when combined with other developments.

The disquiet and unease about our ‘perilous’ direction of travel is something Stephen Hawking taps into in this article, in which he suggests we need to move on from the decision and focus instead on “changing the general attitude towards money that helped foster such a division in the first place”:

People are starting to question the value of pure wealth. Is knowledge or experience more important than money? Can possessions stand in the way of fulfilment? Can we truly own anything, or are we just transient custodians?

We could do everyone a favour by starting that line of questioning right here and right now with BHS and Sports Direct. In an excoriating piece on “broken Britain”, Guardian journalist Aditya Chakrabortty skewers the vastly overrated “industry titans” presiding over catastrophic corporate mismanagement so extreme that it may well yet lead to the severest of penalties. One suspects that the populace at large demands no less:

Britain is the finance capital of the world, and these are some of the biggest names in the industry. Yet Monday’s report finds them “culpable” of cashing the cheques and being conveniently blind to massive corporate failure. In that respect, what Field and his colleagues have done is torn down one of the delusions about post-industrial Britain. From the London Whale to the Libor scandal to BHS, what the City really leads the way in is not ingenuity or innovation, but in being the no-questions-asked SpivZone of financial markets.

Machine Learning and Analytics

William Hague wrote an opinion piece in the Telegraph that was surprisingly insightful, at least for a professional politician, on the challenges the UK faces in the coming era of technological unemployment and the urgent need to create jobs in new industries that don’t exist yet:

“An industrial strategy for the next few decades would mean providing the research base, world-class infrastructure and skills in concentrated areas necessary to attract innovators and jobs that haven’t been invented yet.”

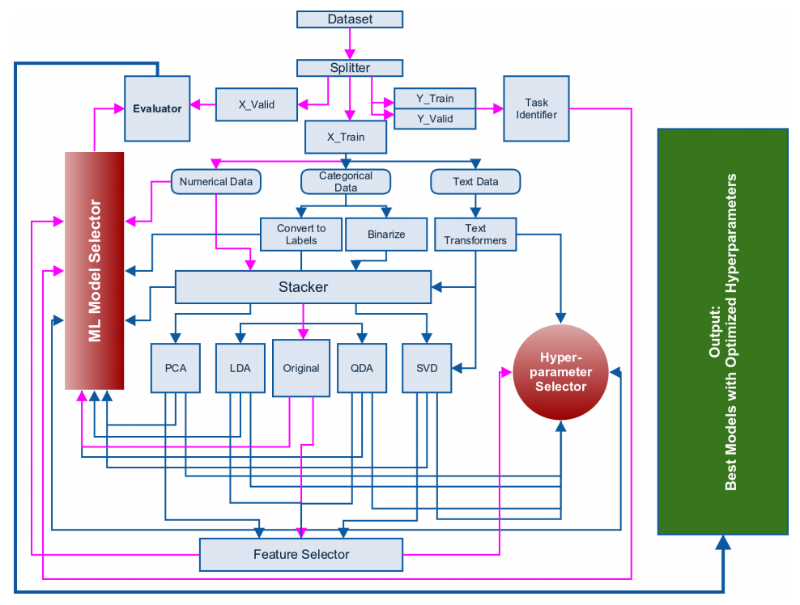

Artificial Intelligence and Machine Learning will likely underpin many of these new positions. If it’s an area relevant to you, it’s worth checking out this great advice from Abhishek Thakur, a Kaggle Grandmaster, on ‘how to approach almost any machine learning problem’. In it he outlines his go-to Python toolkit for machine learning competitions; most of these tools have been showcased or used in some way on this blog over the last year or so:

- To see and do operations on data: pandas

- For all kinds of machine learning models: scikit-learn

- The best gradient boosting library: xgboost

- For neural networks: keras

- For plotting data: matplotlib

- To monitor progress: tqdm

It turns out that for Kaggle competitions your key ‘desert island tool’ is basically the collection of data utilities and classifiers in scikit-learn. Here is Thakur’s flowchart for how to break down a dataset into a form that can be digested by scikit-learn and turned into a model accurate enough to do the business:
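The general shape of that kind of workflow can be sketched in a few lines of scikit-learn. This is a minimal illustration under stated assumptions, not Thakur’s exact pipeline: the bundled iris dataset stands in for a real competition dataset, and the two candidate models (a scaled logistic regression and a random forest) and their parameters are illustrative choices. The steps are: hold out a validation split, fit each model on the training split only, and compare validation accuracy.

```python
# A generic scikit-learn workflow sketch (not Thakur's exact pipeline).
# Assumption: the bundled iris dataset stands in for a real competition dataset.
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def evaluate_models(random_state: int = 42) -> dict:
    """Split the data, fit two candidate models and return validation accuracy for each."""
    X, y = load_iris(return_X_y=True)

    # 1. Hold out a stratified validation set before any fitting, to avoid leakage.
    X_train, X_valid, y_train, y_valid = train_test_split(
        X, y, test_size=0.25, stratify=y, random_state=random_state
    )

    # 2. Candidate models: a scaled linear model and a tree ensemble (which needs no scaling).
    models = {
        "logistic_regression": make_pipeline(
            StandardScaler(), LogisticRegression(max_iter=1000)
        ),
        "random_forest": RandomForestClassifier(
            n_estimators=100, random_state=random_state
        ),
    }

    # 3. Fit on the training split only, then score on the held-out split.
    scores = {}
    for name, model in models.items():
        model.fit(X_train, y_train)
        scores[name] = accuracy_score(y_valid, model.predict(X_valid))
    return scores


if __name__ == "__main__":
    for name, score in evaluate_models().items():
        print(f"{name}: {score:.3f}")
```

Wrapping the scaler and the linear model in one pipeline means the scaler is only ever fitted on training data, which keeps the validation score honest.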

TensorFlow isn’t explicitly in Thakur’s list. It’s essentially encapsulated within the Keras reference, since Keras can run on top of TensorFlow, as highlighted last week. However, it remains a vital tool in its own right, particularly for deep learning. This blog post, which I came across recently, introduces TensorFlow in the more familiar context of a standard supervised learning classifier. The corresponding source repository and sample data are available on GitHub here. The standard TensorFlow tutorials make a good companion piece, showing how to analyse the classic MNIST dataset first with a simple classifier and then building on that with “Deep MNIST”. A subset of the same dataset was used by Andrew Ng in his famous Machine Learning MOOC.

Adrian Rosebrock provides a handy primer explaining, from first principles and using OpenCV and scikit-image, the elements of image processing that underpin convolutional neural networks. His own summary is a good place to start:

In today’s blog post, we discussed image kernels and convolutions. If we think of an image as a big matrix, then an image kernel is just a tiny matrix that sits on top of the image. … This kernel then slides from left-to-right and top-to-bottom, computing the sum of element-wise multiplications between the input image and the kernel along the way — we call this value the kernel output. The kernel output is then stored in an output image at the same (x, y)-coordinates as the input image (after accounting for any padding to ensure the output image has the same dimensions as the input). … Given our newfound knowledge of convolutions, we defined an OpenCV and Python function to apply a series of kernels to an image. These operators allowed us to blur an image, sharpen it, and detect edges.
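The sliding-kernel procedure described above can be sketched in pure Python so that every step is visible. This is not Rosebrock’s code: it is a minimal sketch assuming a grayscale image held as a list of rows, an odd-sized square kernel, and replicated-edge padding so the output keeps the input’s dimensions. The three kernels are standard textbook examples for blurring, sharpening and edge detection. In practice OpenCV’s `cv2.filter2D` performs the same operation far faster.

```python
from typing import List

Matrix = List[List[float]]


def convolve(image: Matrix, kernel: Matrix) -> Matrix:
    """Slide `kernel` over `image`, storing the sum of element-wise products
    at the same (x, y) coordinates in an output image of the same size.

    Assumptions: grayscale image as a list of rows; odd-sized square kernel;
    borders padded by replicating edge pixels. Like the description above,
    the kernel is not flipped (strictly, cross-correlation), which makes no
    difference for symmetric kernels such as those below.
    """
    height, width = len(image), len(image[0])
    size = len(kernel)
    pad = size // 2

    def pixel(y: int, x: int) -> float:
        # Replicate padding: clamp out-of-range coordinates to the nearest edge.
        return image[min(max(y, 0), height - 1)][min(max(x, 0), width - 1)]

    output = [[0.0] * width for _ in range(height)]
    for y in range(height):          # top-to-bottom
        for x in range(width):       # left-to-right
            total = 0.0
            for ky in range(size):
                for kx in range(size):
                    total += kernel[ky][kx] * pixel(y + ky - pad, x + kx - pad)
            output[y][x] = total     # the "kernel output" for this position
    return output


# Classic 3x3 kernels: box blur, sharpen and a Laplacian edge detector.
BLUR = [[1 / 9] * 3 for _ in range(3)]
SHARPEN = [[0, -1, 0], [-1, 5, -1], [0, -1, 0]]
LAPLACIAN = [[0, 1, 0], [1, -4, 1], [0, 1, 0]]

if __name__ == "__main__":
    image = [
        [10, 10, 10, 10],
        [10, 50, 50, 10],
        [10, 50, 50, 10],
        [10, 10, 10, 10],
    ]
    for row in convolve(image, LAPLACIAN):
        print(row)
```

On a flat region the Laplacian kernel outputs zero and the sharpen kernel returns the original value, so only the edges of the bright square produce a non-zero response.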

This excellent HBR article outlines the four mistakes managers make with analytics. It’s worth explicitly calling them out with supporting quotes from the authors:

- Not Understanding the Issues of Integration: “the variety of sources can make it difficult for firms to actually save money or create value for customers”

- Not Realizing the Limits of Unstructured Data: it is actually very difficult to gain insight from most forms of unstructured data because, aside from text-based data, “other forms such as video data are still not easily analyzed”.

- Assuming Correlations Mean Something: “Very large data sets usually contain a number of very similar observations that can lead to spurious correlations and as a result mislead managers in their decision-making”

- Underestimating the Labor Skills Needed: “Companies that assume large volumes of data can be translated into insights without hiring employees with the ability to trace causal effects in that data are likely to be disappointed.”
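The spurious-correlation point is easy to demonstrate with a simulation. The sketch below illustrates one common mechanism, multiple comparisons, and is not necessarily the exact one the HBR authors describe; the "features" are assumed to be independent Gaussian noise, so any correlation found is pure chance. With only 20 observations, each noise feature has just a few per cent chance of reaching |r| > 0.5, but across 1,000 features the odds that at least one does are overwhelming.

```python
import random
import statistics


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)


def strongest_spurious_correlation(n_features, n_obs, seed=0):
    """Largest |r| between a random target and n_features independent random
    features. Every feature is pure noise, so any correlation is spurious."""
    rng = random.Random(seed)
    target = [rng.gauss(0, 1) for _ in range(n_obs)]
    best = 0.0
    for _ in range(n_features):
        feature = [rng.gauss(0, 1) for _ in range(n_obs)]
        best = max(best, abs(pearson(feature, target)))
    return best


if __name__ == "__main__":
    for n in (1, 10, 100, 1000):
        print(n, round(strongest_spurious_correlation(n, n_obs=20), 3))
```

The more variables you screen against the same outcome, the more impressive the best chance correlation looks, which is exactly why a large data set alone does not justify a decision.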

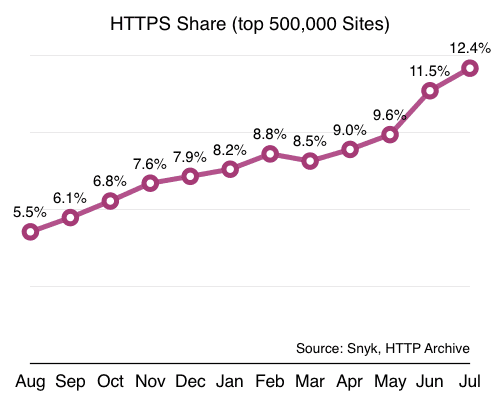

Security

According to this analysis, HTTPS adoption has more than doubled in the last year. Tools such as Let’s Encrypt and incentives such as Google’s SEO boost for HTTPS sites are both cited as reasons. My adventures in enabling it on this site at the tail end of 2015 are outlined in some detail here.

A LastPass browser vulnerability potentially allowing an attacker to access encrypted vault data was widely reported this week. It appears to affect only Firefox users, but it is clearly a major concern that such an important app could be exploited.

Apps and Services

Andreessen Horowitz has a post on how WeChat successfully built mobile payments into its messaging service by taking advantage of an important cultural meme in China, namely the red envelope:

Red envelopes were WeChat’s secret weapon in getting users to adopt mobile payments on their messaging platform, unlocking all subsequent transaction activity across their ecosystem.

Facebook Messenger now has 1 billion monthly active users (MAU).

John A De Goes has written a blunt and startlingly brutal indictment of Twitter’s fundamental product problem which he suggests can only be solved by a company other than Twitter:

The root problem with Twitter is that the product is carefully engineered to cultivate maximum violence. … Not intentionally, of course, but rather through a combination of early product decisions that were not re-visited, together with blind optimization of Twitter’s game mechanics toward vanity metrics. … Twitter’s cultivation of violence, in turn, affects user engagement, user churn, the demographics of Twitter, and numerous other factors that have resulted in Twitter’s total failure to become a behemoth of social media.

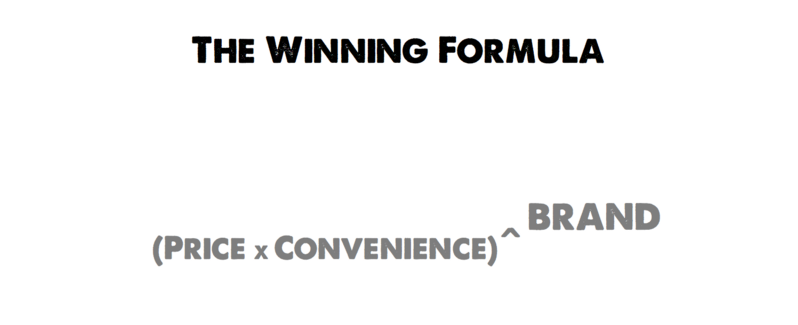

The acquisition of Dollar Shave Club by Unilever for $1 billion arguably represents the “appification of FMCG” and has profound implications for the role and importance of brand in scaling the value of future startups in the consumer goods space.

If you haven’t seen their memorable first video, it’s worth taking a look now.

Benedict Evans has declared the smartphone platform wars over: both Android and iOS won, though perceptions of how the spoils are divided in terms of device numbers remain surprisingly wonky. Here is his assessment of the final scores:

- 630m iPhones and 250m iPads, for a total of 880m

- 1.3-1.4bn Google Android phones, and 150-200m Google Android tablets.

- Maybe 450m additional Android phones and 200m Android tablets in China, not connected to Google services

- For a total base of 2.4-2.5bn iOS and Android phones, and (say) 600m-750m iOS and Android tablets.
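As a quick sanity check on how those totals are built up, the itemised estimates can simply be summed. This sketch uses only the figures quoted above, in millions, with single-figure estimates entered as a (low, high) pair with equal ends; the "maybe" on the China numbers is Evans’s own hedge and carries through to the totals.

```python
from typing import Dict, Tuple

Range = Tuple[int, int]  # (low, high) estimate in millions of devices

# Figures as quoted above; single-figure estimates are entered as (x, x).
PHONES: Dict[str, Range] = {
    "iPhone": (630, 630),
    "Android phones with Google services": (1300, 1400),
    "Android phones in China without Google services": (450, 450),
}
TABLETS: Dict[str, Range] = {
    "iPad": (250, 250),
    "Android tablets with Google services": (150, 200),
    "Android tablets in China without Google services": (200, 200),
}


def total(estimates: Dict[str, Range]) -> Range:
    """Sum the low ends and the high ends of the estimate ranges separately."""
    low = sum(lo for lo, _ in estimates.values())
    high = sum(hi for _, hi in estimates.values())
    return low, high


if __name__ == "__main__":
    print("phones (m):", total(PHONES))
    print("tablets (m):", total(TABLETS))
```

The itemised phone figures sum to 2,380-2,480m, which rounds to his 2.4-2.5bn; the tablet items sum to 600-650m, within his deliberately loose “(say) 600m-750m”.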

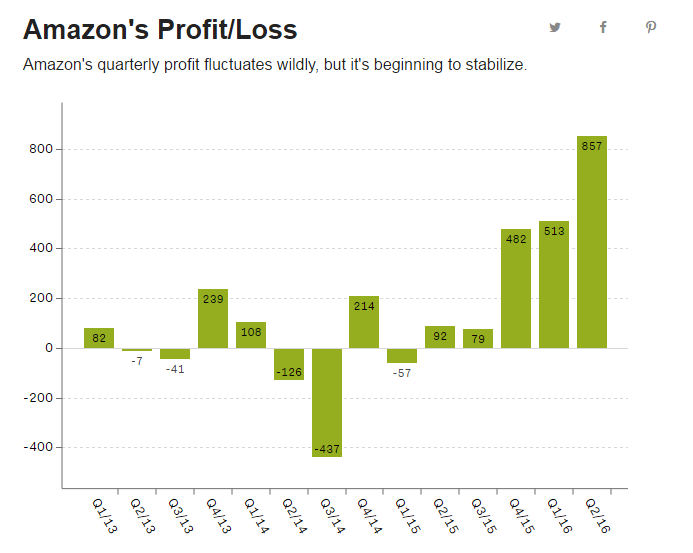

The Verge explain why Amazon’s spectacular quarterly results show a company in the rudest of health.

Amazon is seeking to capitalise on that growth by expanding its machine learning capabilities, opening a new site dedicated to the discipline in Turin, Italy.

Mobile and Devices

CNX are reporting a $30 Geiger counter for iOS and Android.

Engadget went hands-on with the $99 Mobvoi Ticwatch 2 smartwatch and found much to like in this distinctive Chinese proposition, particularly its compatibility with the Android Wear ecosystem:

To me, the biggest selling point of the Ticwatch is its promised compatibility with all Android Wear apps. Despite running the company’s own Ticwear 4.0 platform, the Ticwatch has a compatibility mode that lets you connect the watch to the Android Wear app and install programs from there.

These ten apps are “killing your phone”. The takeaway seems to be that polling for messages across multiple providers is harmful.

NYT explain how to get more out of Alexa and Echo: it’s all down to adding more third-party “skills” plugins.

Software and Architecture

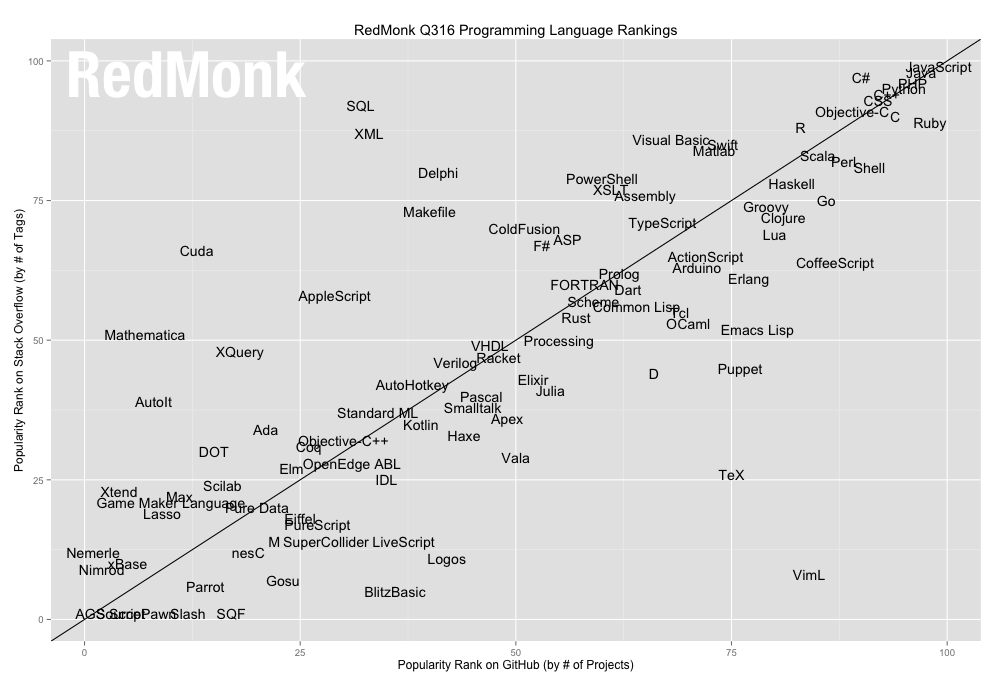

RedMonk’s programming language rankings, based on GitHub and Stack Overflow data, have the following occupying the top four slots:

1. JavaScript

2. Java

3. PHP

4. Python

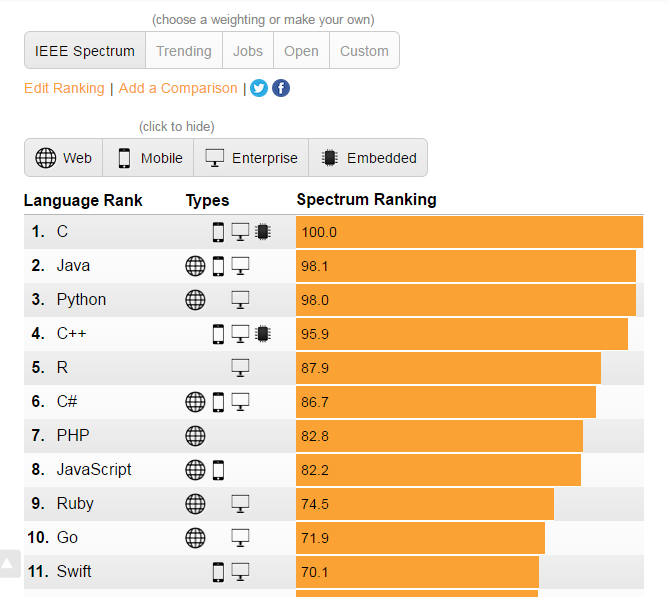

Meanwhile, the IEEE use their own analysis to produce a top 10 of their own.

There are differences but, broadly speaking, these analyses suggest that Java, JavaScript, Python, C/C++/C# and PHP remain the big hitters in the language stakes.

Jim Coplien’s fascinating historical perspective on how Agile and Object-Oriented Programming (OOP) have lost their way together is an entertaining and stimulating watch. For Coplien the fundamental issue at stake is the loss of a human-centric perspective. We appear to have lost our connection with the customer’s underlying mental model and with the human foundations of development, as encapsulated in networks of cooperating objects at runtime achieving the user’s goal. What matters is feeling, emotion and use cases:

“We don’t sell classes. We sell use cases. Use cases are about conversation. It’s about asking the customer. “People and interactions”.”

Coplien explicitly references the work of Christopher Alexander and introduces the term “habitable software”. Alexander was an architect of the built environment who had a major influence on the field of software architecture, particularly through the notion of “design patterns”. It’s a good opportunity to link to Alexander’s classic article “A City Is Not a Tree”, which ties in with something I wrote about a couple of weeks ago, namely why suburbia sucks: it isn’t really habitable for humans. Here’s a flavour of his writing:

If we ask a man to name his friends and then ask them in turn to name their friends, they will all name different people, very likely unknown to the first person; these people would again name others, and so on outwards. There are virtually no closed groups of people in modern society. The reality of today’s social structure is thick with overlap – the systems of friends and acquaintances form a semilattice, not a tree
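Alexander’s article defines both structures precisely: a collection of sets is a tree if any two sets are either wholly disjoint or one is wholly contained in the other, and a semilattice if, whenever two overlapping sets belong to the collection, the set of elements common to both also belongs to it. A minimal Python sketch of those two axioms follows; the districts and friendship circles are hypothetical examples chosen for illustration.

```python
from itertools import combinations
from typing import FrozenSet, Iterable

Group = FrozenSet[str]


def is_tree(groups: Iterable[Group]) -> bool:
    """Tree axiom: any two sets are either wholly disjoint or one contains the other."""
    return all(
        a.isdisjoint(b) or a <= b or b <= a
        for a, b in combinations(list(groups), 2)
    )


def is_semilattice(groups: Iterable[Group]) -> bool:
    """Semilattice axiom: whenever two sets overlap, their intersection is also in the collection."""
    collection = set(groups)
    return all(
        a.isdisjoint(b) or (a & b) in collection
        for a, b in combinations(collection, 2)
    )


# Hypothetical examples: a strict hierarchy of districts versus overlapping circles of friends.
DISTRICTS = [frozenset("abcd"), frozenset("ab"), frozenset("cd")]
FRIENDS = [
    frozenset({"ann", "bob", "cat"}),
    frozenset({"cat", "dan", "eve"}),
    frozenset({"cat"}),  # the overlap between the two circles
]

if __name__ == "__main__":
    print(is_tree(DISTRICTS), is_semilattice(DISTRICTS))  # True True
    print(is_tree(FRIENDS), is_semilattice(FRIENDS))      # False True
```

Every tree is also a semilattice, since overlapping sets in a tree are nested and their intersection is simply the smaller set. The reverse does not hold: overlapping friendship circles, like Alexander’s social structures, break the tree axiom.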

Science

The Joy of Data is an outwardly well-produced BBC4 programme covering the history and uses of information in society, and it’s well worth watching:

“This high-tech romp reveals what data is and how it is captured, stored, shared and made sense of.”

It starts off well, outlining the crucial role data played in helping William Farr determine the root cause of cholera, introducing Shannon codes and offering a neat practical explanation of packet switching. However, it seemed to overreach itself with an intrusive exercise in personal data gathering, conducted in a specially constructed observation setup run by Bristol University. Instead of providing an insight into how data can help improve human existence, it seemed to offer a glimpse into a soulless future in which we are reduced to, and defined by, the vast quantities of telemetry generated by our activity:

“What The Joy of Data failed to explain is how the age of data is also the age of loneliness.”

Quartz reports on behavioral genetics, one of the fastest-growing fields of scientific research. The discipline was enabled by the breakthrough in decoding human DNA 16 years ago and has progressed in leaps since, powered by big data analysis of twin study data. Perhaps echoing the previous sentiment, it retains a distinct ability to disquiet many people.

The Guardian on the indisputable public health case for disciplined hand washing and the link with disgust.

Sticking with public health, rather than worrying about Brexit, Brits really ought to worry about the long-term consequences of a remarkable decline in national walking levels, which “have fallen by more than a third in three decades”. At WOMAD this weekend, walking was a major part of proceedings, and an altogether different symbol of American exceptionalism was still on prominent display, tie-dyed, decades after his death: